The case of the Fiddler heisenbug

June 18, 2014

There is a presentation I give to our graduates during their first week with us; the second slide is:

This is taken from the multimedia overload that was U2’s Zoo TV tour. I use it to try to get our graduates to accept that they are really back at the start of their learning process. This is pretty much how I felt a week or two back when one of our consultants said that they were seeing lots of HTTP 401 authentication traffic while our application was running. I’d personally spent a lot of time over the years trying to make sure that we were as efficient as possible, so I was sceptical to say the least…

Background

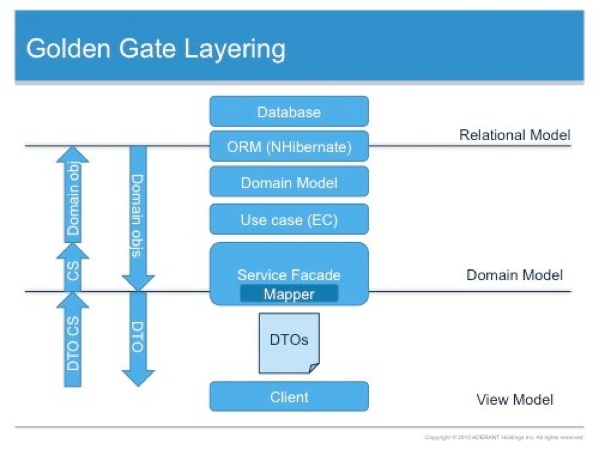

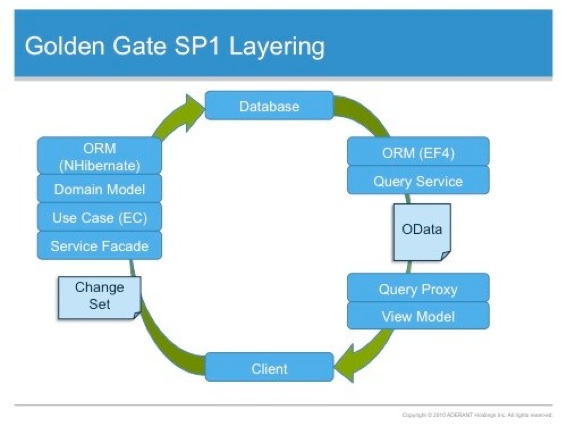

The services architecture for the product I work on follows the Command Query Responsibility Segregation approach, which I’ve talked about before. In summary, we fetch data from an OData service provided by WCF Data Services and then make updates via a suite of services implemented using regular SOAPy WCF. We closely monitor the message exchange between our applications and services to ensure that we aren’t too chatty, messages aren’t too big and so on – we do this using the excellent Fiddler. Many moons ago, I spent quite some time getting my head around how to correctly configure IIS and WCF to use Kerberos to allow the services to be scaled out over a web farm. By now I’ve run through this on numerous test environments and real-world environments, so I was pretty confident I knew how it worked.

The Problem

Our software runs on-premise within the walled garden of the corporate network. We support some of the largest law firms in the world and so on occasion have to deal with some very wide area networks. The connection from desktop to server can take place over long distances with high latency and low bandwidth; any messaging overhead can be painful. For years now we’ve used Fiddler to look at our services, as all the call activated services use HTTP. At one client, Fiddler was not working [which turned out to be a conflict with the McAfee software they used] and so they used Wireshark instead. When observing the HTTP traffic in Wireshark, our consultants and the client saw many HTTP 401 authentication responses, far more than we expected. Each 401 response adds latency and requires additional messages to be exchanged between the client and the server. In our testing to date, we believed we had tuned the services to require only a single 401 authentication response and then to cache and present the credentials on each subsequent request.

TL;DR

To stop a WCF Data Services request secured using Windows Authentication from requiring authentication on every call, you need to set the PreAuthenticate flag to true on the HttpWebRequest via the SendingRequest2 event on the generated context. Fiddler (and Web Proxy in the Microsoft Message Analyzer) hides this from you because it implements a connection pool of Keep-Alive connections.

Reproducing the issue

The first task was to reproduce the behaviour inside one of our test environments. I’m fortunate to have a very well spec’d HP Z420 on my desk, which is a great Hyper-V server. Inside Hyper-V I have a private domain set up which has a couple of load balanced application servers running our software. First off, I ran the client software on both Windows 7 and Windows 8.1 with Fiddler running in the background; there was no sign of the additional 401s. I then switched over to lower-level network monitoring, but rather than using Wireshark, I decided to try out the Microsoft Message Analyzer. This is Microsoft’s replacement for the Network Monitor tool. It provides a number of different filters, two of which were of interest:

- web proxy – same deal as Fiddler, looking at HTTP

- local link layer – all traffic on the NIC

Using the web proxy produced the same results as Fiddler; however, using the local link layer filter showed lots of additional 401 responses. When I ran the Message Analyzer with both the web proxy and the local link layer filters, there were no additional 401s. We had hit a heisenbug: when observing the HTTP traffic through a web proxy, the proxy was changing the behaviour of the traffic.

Confirm our current understanding

My faith in our current collective understanding of what was happening was pretty shaken so I ran through the various settings that I previously thought would avoid these 401s:

1. Is the URL of the service trusted? Windows must consider the service URL to be trusted to pass Kerberos tickets. An easy way to check the zone of any URL is the following code snippet:

var zone = System.Security.Policy.Zone.CreateFromUrl("http://wsakl001013.ap.aderant.com/Expert_Local");

Console.WriteLine(zone.SecurityZone);

If necessary, add the service host URL or a matching pattern to the Local Intranet Zone via IE:

In this example, *.aderant.com has been added to the local intranet zone.

2. Are the load balanced services running as a domain account? Does this account have an appropriate HTTP SPN registered against it?

3. Do the various IIS web applications have the useAppPoolCredentials flag set in configuration? This instructs IIS to expect the Kerberos SGT (service granting ticket) to be encrypted using the credentials of the account used by the mapped application pool, rather than the default machine account.

4. Is Kerberos configured to use a transport session rather than a connection per call for authentication? This is set in IIS against the web application using the authPersistNonNTLM setting.

This adds a Persistent-Auth header to the HTTP response (seen here using Message Analyzer):

These settings are available from within the IIS Manager using the Configuration Editor:

Navigate to the system.webServer/security/authentication/windowsAuthentication settings:

Set the properties as required. If you want to programmatically set these values via script, IIS will helpfully generate the scripts for you. Look over on the right hand side of the Configuration Editor and you’ll see a ’Generate Script’ option.

Clicking on this will generate a change script for you in a number of technologies; I tend to favour PowerShell:
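For comparison, here is a rough C# sketch of the same change using the Microsoft.Web.Administration API. It is not the script IIS generated for me. The class name `WindowsAuthSettings`, the method `Apply` and the location path passed to it are assumptions for illustration, and it must be run elevated on the IIS server.

```csharp
using Microsoft.Web.Administration;

public static class WindowsAuthSettings
{
    // locationPath is the "site/application" path in applicationHost.config,
    // e.g. "Default Web Site/Expert_Local" (a hypothetical value).
    public static void Apply(string locationPath)
    {
        using (var serverManager = new ServerManager())
        {
            Configuration config = serverManager.GetApplicationHostConfiguration();

            // The same section the Configuration Editor navigates to.
            ConfigurationSection section = config.GetSection(
                "system.webServer/security/authentication/windowsAuthentication",
                locationPath);

            // Decrypt Kerberos tickets with the application pool account.
            section["useAppPoolCredentials"] = true;

            // Keep the authenticated state for the connection on Kerberos requests.
            section["authPersistNonNTLM"] = true;

            serverManager.CommitChanges();
        }
    }
}
```

Calling `WindowsAuthSettings.Apply("Default Web Site/Expert_Local")` would set both flags for that one application only.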

All this checked out on my environment but I wanted to ensure that NTLM was not in play (here). To do this I enabled NTLM logging on the domain controller using group policy. Using gpedit.msc, I enabled the ‘Network Security: Restrict NTLM: Audit Incoming NTLM Traffic’ and ‘Network Security: Restrict NTLM: Audit NTLM authentication in this domain’ policies [under Windows Settings, Security Settings, Local Policies, Security Options]:

Interestingly, it showed that there was unexpected NTLM traffic – from the AppFabric services to the SQL Server. The MSSQLService was set up to run as a domain account, service.sql, but the appropriate SPN had not been mapped to that account:

> setspn -a MSSQLSvc/SqlServer2012.expert.local:1433 service.sql

> setspn -a MSSQLSvc/SqlServer2012:1433 service.sql

I mapped both the FQDN and the NetBIOS name formats just to be sure. This resolved the issue and I no longer saw NTLM traffic.

What Next?

At this point I thought the environment was configured as it should be but I was still seeing the additional 401s. After a lot of searching and head scratching I came across this post from Fiddler author, Eric Lawrence. The rub being:

Keep-Alive

In some cases, the time required to open a new network connection to the server is greater than the time required to send the request and download the response. Therefore, if the client opens a new connection for every request, the application’s performance is greatly degraded. The practice of reusing a single TCP/IP connection for multiple requests is called “keep-alive” and it’s the default behaviour in HTTP/1.1. However, clients or servers may choose to disable keep-alive by either sending a Connection: close header or by abruptly closing the connection after each transaction.

Fiddler maintains a “connection pool” of idle keep-alive connections to the server. When a client request comes in, this pool is first checked to determine if an existing connection is available on which the request can be sent. Even if the client specifies a Connection: close request header, that only causes Fiddler to close the client’s connection after the response is sent—the server connection is returned to the pool (unless it too disabled keep-alive).

What this means is that if your client isn’t using Keep-Alive connections, its performance can be severely impacted. However, when Fiddler is introduced, performance is improved because “expensive” server connections are reused. (Since Fiddler and the client are (typically) running on the same computer, establishing a new connection from the client to Fiddler is very fast.)

The fix for this problem is simple: Ensure that your client is using KeepAlive connections. That’s as simple as:

- Ensure that you’re using HTTP/1.1

- Ensure that you haven’t disabled Keep-Alive (e.g. set the KeepAlive property of the HttpWebRequest object to true)

- Don’t send Connection: Close headers

Note that creating connections to servers can be even more expensive than the simple TCP/IP establishment cost. First, there’s TCP/IP Slow-Start, a congestion-management feature of the protocol that means that new connections have a slower transfer rate than longer-lived connections. Next, if you’re using HTTPS, there’s an expensive cryptographic handshake which must be performed on each new connection. Lastly, if your connections use either the NTLM or Negotiate authentication protocols, you may find that each new connection requires a 3-step handshake (e.g. the server sends a HTTP/401 challenge, the client resends the request, the server sends another HTTP/401 challenge, the client resends the request with a challenge-response, and the server finally sends a HTTP/200). Because these are “connection-oriented” authentication protocols, subsequent requests over an existing connection may be able to avoid these extra round-trips.

Here is the heisenbug: Fiddler is maintaining a Keep-Alive connection to the server even though my call may not be.
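The client-side checklist from the quoted post can be sketched in C# roughly as follows. The class name `KeepAliveRequestFactory` and the URL in the usage note are assumptions for illustration; the request is only built, never sent. On recent .NET versions `WebRequest.Create` compiles with an obsolescence warning.

```csharp
using System;
using System.Net;

public static class KeepAliveRequestFactory
{
    // Builds (but does not send) a request configured for connection reuse.
    public static HttpWebRequest Create(string url)
    {
        // WebRequest.Create returns an HttpWebRequest for http/https URLs.
        var request = (HttpWebRequest)WebRequest.Create(url);

        // HTTP/1.1 is where keep-alive is the default behaviour.
        request.ProtocolVersion = HttpVersion.Version11;

        // Leave keep-alive on so no "Connection: close" header is sent.
        request.KeepAlive = true;

        // Present the current Windows identity when challenged.
        request.UseDefaultCredentials = true;

        return request;
    }
}
```

For example, `KeepAliveRequestFactory.Create("http://services.example.local/Hello.svc")` returns a request whose `KeepAlive` property is `true`.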

So how does this relate to the WCF service calls? For the basicHttpBinding, the Keep-Alive behaviour is enabled by default; it can optionally be turned off via a custom binding, see here.
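As a hedged illustration of where that switch lives, the sketch below builds a custom binding in code with the keep-alive setting exposed. The class name `KeepAliveBindingFactory` is an assumption, and the SOAP 1.1 / UTF-8 text encoding is chosen to mirror what basicHttpBinding uses rather than taken from our actual configuration.

```csharp
using System.Net;
using System.ServiceModel.Channels;
using System.Text;

public static class KeepAliveBindingFactory
{
    // Returns an HTTP custom binding with keep-alive switched on or off.
    public static CustomBinding Create(bool keepAlive)
    {
        var transport = new HttpTransportBindingElement
        {
            // false makes WCF close the connection after each call.
            KeepAliveEnabled = keepAlive,
            // Negotiate allows Kerberos (falling back to NTLM).
            AuthenticationScheme = AuthenticationSchemes.Negotiate
        };

        // Text encoding with SOAP 1.1, as basicHttpBinding uses.
        var encoding = new TextMessageEncodingBindingElement(
            MessageVersion.Soap11, Encoding.UTF8);

        // The transport element must come last in the stack.
        return new CustomBinding(encoding, transport);
    }
}
```

Passing `false` gives a binding that opens a new connection per call, which is the situation in which every call would have to authenticate again.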

Back to Basics

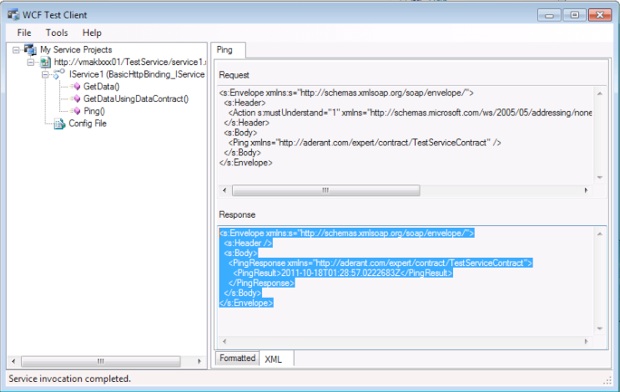

At this point I was still convinced I should not be seeing those additional 401s, so I decided to build a very simple secured WCF service and generate a proxy to the standard OData service we use.

Here is a WCF Service that simply says Hello to the calling Windows user.
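A minimal sketch of what such a service could look like is given here. This is not the original listing: the names `IHelloService`, `HelloService` and `Greeting` are assumptions for illustration.

```csharp
using System.ServiceModel;

[ServiceContract]
public interface IHelloService
{
    [OperationContract]
    string SayHello();
}

public class HelloService : IHelloService
{
    public string SayHello()
    {
        // ServiceSecurityContext.Current is null outside a secured WCF call.
        var context = ServiceSecurityContext.Current;
        string name = (context != null && context.WindowsIdentity != null)
            ? context.WindowsIdentity.Name
            : null;
        return Greeting(name);
    }

    // Kept separate so the message format can be checked without hosting the service.
    public static string Greeting(string userName)
    {
        return "Hello " + (string.IsNullOrEmpty(userName) ? "anonymous" : userName);
    }
}
```

When the call is authenticated with Windows credentials, the reply contains the caller's account name, which is a quick way to confirm which identity reached the service.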

WCF Configuration as follows:
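The configuration itself is not reproduced in text form. As an assumption about its general shape, the equivalent binding can be sketched in C# code: a basicHttpBinding that authenticates with Windows credentials at the HTTP layer. The class name `WindowsAuthBinding` is hypothetical, and `TransportCredentialOnly` is assumed because the service URLs in this post are plain http.

```csharp
using System.ServiceModel;

public static class WindowsAuthBinding
{
    public static BasicHttpBinding Create()
    {
        // TransportCredentialOnly: authenticate over HTTP without requiring HTTPS.
        var binding = new BasicHttpBinding(BasicHttpSecurityMode.TransportCredentialOnly);

        // Windows maps to Negotiate, so Kerberos is used when an SPN is available.
        binding.Security.Transport.ClientCredentialType = HttpClientCredentialType.Windows;

        return binding;
    }
}
```

The same binding can be used on both the service endpoint and the generated client proxy.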

Visual Studio created a service reference for me and I simply called the service a number of times, both reusing the proxy and closing the proxy and recreating it:

The link layer trace was as follows:

This was as expected, a single 401 but then 200s on subsequent calls. Kerberos was being used successfully and a transport level session was established! Just for completeness I could see the HTTP Keep-Alive header in the POST:

OK, on to the WCF Data Service. Again in Visual Studio I generated a service reference then:

This resulted in:

And the following trace:

At last here was the repeated 401/200 behaviour.

I checked for the Keep-Alive header in the request:

And looked for the Persistent-Auth header in the response:

Both present.

More head scratching.

More searching.

Then I posted this question to the Microsoft WCF Data Services forum.

While waiting for an answer, a colleague and I took a look at the System.Data.Services.Client.DataServiceContext base class for the generated context object. Working through that code, I came across the HttpWebRequest class, which had a PreAuthenticate property that looked like exactly what I wanted. A little more digging and then I found I could do this:

var context = new ExpertDbContext(…

context.Credentials = CredentialCache.DefaultNetworkCredentials;

context.SendingRequest2 += context_SendingRequest2;

static void context_SendingRequest2(object sender, SendingRequest2EventArgs e) {

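// Once authenticated, send the Authorization header up front on later requests instead of waiting for a 401 challenge.
((HttpWebRequestMessage)e.RequestMessage).HttpWebRequest.PreAuthenticate = true;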

}

This was it!

With this small change in place, the 401s were gone from the WCF Data Service traffic. Just as I was grabbing a celebratory cup of coffee, a colleague asked if I had seen the response to my question on the forum. I had not; it validated the above approach – thank you, Fred Bao.

Wrapping Up

This took about a week of elapsed time to work through. We’ve now updated our query service (OData) proxy to set the PreAuthenticate flag and can see improved system performance, particularly over constrained WAN connections. That Fiddler hid this really threw me; heisenbugs are really hard to deal with.